Our free sitemap generator not only allows you to build an XML sitemap for Google, Bing and other search engines, but also includes tools that help discover problems that may be preventing your site from ranking well in search results. Best of all, it’s completely free, with no limits and nothing to download!

** IMPORTANT ** The SITEMAP GENERATOR respects sessions! If you are logged into your site and have delete privileges, the sitemap generator will follow all links, including ‘delete links’, so play it safe and make sure you are NOT logged into your website!

The current version of the XML Sitemap Generator is v2.14. The main focus of this update was the indexing speed of very large websites, which is now 250% faster than in the previous version. IF YOU HAVE PROBLEMS running my tool, see my section on using Firefox ESR.

How to Make a Sitemap

For those that want to skip the instructions: XML Sitemap.

At one time, each search engine had its own idea of how a sitemap should be formatted. Fortunately, a SITEMAPS standard was developed for XML sitemaps that Google, Bing, Yahoo and other SEs now adhere to.

There is still the traditional HTML sitemap, and we have taken that into consideration when building the sitemap generator; our webmaster tool provides you with the option to generate an XML sitemap, HTML sitemap, raw list of URLs, session report and an HTML report – how you save (export) the file is up to you.

Before the XML Sitemap, website owners used an HTML sitemap to get their content recognized by search engines, and it still works extremely well! The nice thing about this type of sitemap is that it can help visitors navigate your site while allowing search engines to find your content.

GENERATE A SITEMAP FAST

It’s simple, just enter the website you would like to generate a site map for (found under the ‘settings’ tab) and click the little green arrow to start crawling.

The sitemap generator will spider your site using the default settings and give you the option to create an XML sitemap (or HTML sitemap, depending on your need).

Any errors, such as missing pages, duplicate titles or overly large files that may be slowing down your site will be listed for your review.

SITEMAP GENERATOR DETAILS (the manual)

This site map generator (now part of the webmaster tool) is loaded with tons of features and consists of six tabs:

‘Project’ tab – allows you to save and load your sitemap project. This can be very handy when making an XML Sitemap for a large website with thousands of pages. If you decide to use filters after you have crawled your site, you need to select “New Project” and run it again to obtain your new sitemap. Note: A sitemap project file is NOT the same as an XML Sitemap (which is found under the Sitemap Tab).

‘Settings’ tab – allows you to specify the way your site will be spidered and what will be included in your Google or XML sitemaps.

- Project Name – This will be the name of your project

- URL – The full address (including the http://) of the website you would like to create a Google or XML sitemap from

- Filters (case sensitive) – you can tell our tool to include or exclude certain files or content when you generate a sitemap. Regular expressions are supported when you prefix a filter with “complex:”.

- Include / Exclude Filter – This is a list of path patterns; the asterisk (*) wildcard is supported and matching is case sensitive. When the sitemap generator is about to crawl a location, the location is validated against all inclusion patterns. If none match, the location will not be processed and will not be placed into the sitemap. If you leave this area empty, it is assumed that you want to include everything, so all locations are processed instead of being excluded from processing.

- Include / Exclude Content Type Filter – same rules apply as with include/exclude filters and target the type of content.

- Here are some examples of exclude filters I used for my WordPress Sitemap:

*/trackback/*

*/feed/*

*/feed

*/comments/*

*/tag/*

*/author/*

*/wp-content/*

*/wp-json/*

*xmlrpc*

*wp-admin*

*.css

*.xml

*.zip

*.swf

*.jpg

*.jpeg

*.png

*.pdf

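complex:.\?.

Note: The last statement uses the “complex:” prefix, so it is treated as a regular expression; it excludes any url with a ? in it. A complex statement can be much more specific, for example one that excludes all pages that start with /shopping/food/ and contain only letters.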

- Informal Links Regex – Allows you to search for links that are not standard, such as hidden comment spam that may link out to other sites.

Ex: (?i)[a-zA-Z0-9\-\.]+\.(com|org|net|mil|edu)

- Rules – Allows you to set rules when creating your XML, HTML or Google Sitemap.

- Load From – Provides you with the option to process files from the entire server or only from below the initial directory specified by the URL parameter. For example, if the URL parameter is a server address, this option does not affect the behavior of the Google sitemap generator. However, if you enter a directory, say for example http://www.popupcheck.com/news/index.html, only files below the /news directory will be processed, including any sub directories.

- Respect Robots.txt file – you can tell the sitemap generator to honor this file or to ignore it.

- Respect Meta Robots – you can do as the robots meta tag instructs or ignore it.

- Respect No Follow – If the sitemap builder finds a link with a no-follow tag, it will ignore or follow it depending on your selection.

- Ignore invalid links – If you find links that try to back up past your root directory, then you can choose to not include this in your sitemaps.

- Exclude images – Check this. Images will not be included anyway (not used)

- Download only new files – this works when you have a sitemap project that you have saved out

- Case Sensitive URLs – Treat URLs that differ only in case as unique

- Skip unmatched non-canonical links – If a page has a canonical url that differs from itself, it will not be included in the sitemap

- Options – Some cool stuff here and can be very important!

- Add skipped links to sitemap – when the webmaster tool crawls your site, it may find bad links. This option allows you to include those in the sitemap anyway.

- User agent – When you spider a site, you leave the name ‘AuditMyPC Webmaster Tool’ in the log files as the browser type. Some web hosting companies may block a browser if it is making too many requests. You can change the user agent to something else by selecting from the drop down or typing it in!

- Max Level – This is not the file depth in a directory structure, but 1 + the number of links between this document and the root document (project settings url). For example, if the settings url is set to testingiam.com, then that document is level 1. If it links to testingiam.com/level1/, that page is level 2, and if that page links to testingiam.com/level1/level2/level3/level4/level5/level6/level7/, the linked page is level 3.
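The Max Level idea can be sketched in a few lines of Python. This is only an illustration, not the generator’s own code: the `page_levels` helper and the hard-coded link graph are hypothetical, and it assumes the level is counted along the shortest chain of links from the root.

```python
from collections import deque


def page_levels(links, root):
    """Breadth-first walk: the root is level 1, each link hop adds 1."""
    levels = {root: 1}
    queue = deque([root])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in levels:  # first visit uses the fewest hops
                levels[target] = levels[page] + 1
                queue.append(target)
    return levels


# Hypothetical link graph mirroring the Max Level example.
LINKS = {
    "testingiam.com": ["testingiam.com/level1/"],
    "testingiam.com/level1/": [
        "testingiam.com/level1/level2/level3/level4/level5/level6/level7/"
    ],
}

if __name__ == "__main__":
    for url, level in page_levels(LINKS, "testingiam.com").items():
        print(level, url)
```

Note how the deeply nested page still ends up at level 3, because only the number of link hops matters, not the number of directories in its path.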

‘Crawler’ tab – Set the speed at which the sitemap is generated.

- Request Delay – Our XML sitemap generator works extremely fast. The downside is that some internet service providers may find this places a heavy load on the server. If this is the case, you can place delays between requests.

- Connect Timeout – When the generator encounters pages that don’t load or take too long while building XML sitemaps, a timeout can be set.

- Read Timeout – If the spider finds a page that goes on forever, you can specify a timeout for reading that page.

- Transfer Rate – Each thread can transfer web pages at a very fast rate. You can tone that down a bit if necessary, but the default works fine.

- Thread Count – The number of simultaneous crawling threads to run when creating the Google site map. Specifying a large number of threads may significantly decrease overall crawling time but will increase bandwidth usage – so use with caution or just run with the default.

- Autosave Interval – Tells the sitemap generator to save the project out every X number of minutes – default is don’t save. Change this if you have a very large site!

- Once you click on the button, crawling will begin and you’ll be presented with status indicators for thread status, uri, values and more. All parameters are self explanatory and ‘Finished’ will appear once crawling is completed. You may stop the sitemap generation at any time by pressing the ‘cancel’ button.

‘Sitemap’ Tab – Contains a ton of information about each page, covering all the locations / files that have been crawled, and updates in real time. Under the ‘Sitemap’ tab, you have sub-tabs, such as ‘save sitemap’, ‘retry’, ‘row filter’, ‘column filter’ and ‘trees’.

- Export – this is where you decide what type of sitemap you would like to build.

You can choose ‘Sitemap XML’ (for creating XML sitemaps used by Google, Bing, Yahoo and others), ‘URL Raw List’, ‘Delimited File’, ‘Session File’, ‘HTML’ (sitemap only – old style sitemap, not an XML sitemap) and ‘HTML Report’.

- Retry Failed – This option will retry reading pages from the sitemap that had problems on the last run

- Row Filter – When building a sitemap (crawling a site), you can filter out rows based on just about anything you can think of (see the question mark next to each item for details). For example, Google released results of what they found when indexing the top sites; one of those metrics is the average size of a web page, which was 312KB, so you could enter 319488 (312 × 1024) as the length filter (needs to be in bytes) and find all the pages that Google considers large – and fix them.

- Column Filter – Same as the Row filter but for Columns.

- Find – This allows you to search your xml sitemap for text.

- You have the option to edit ‘Modified’, ‘Change frequency’ and ‘Priority’ cells for each row (or all rows – we’ll get to that in a moment).

- Listing of URLs (pages) – You’ll see a listing of all your web pages that include items like Title, Status, Errors and more.

For the Google sitemap, you can set the ‘Change Frequency’ and ‘Change Priority’ for one or multiple urls by highlighting the desired url(s) and right clicking, then choosing your option. You can also delete pages from your Google or XML Sitemap by simply highlighting the desired urls and pressing your computer’s delete key.

- Change frequency – Tells Google Sitemaps the frequency that content of a particular URL will change. Your options are “always”, “hourly”, “daily”, “weekly”, “monthly”, “yearly” or “never”. The value “always” should be used to describe documents that change each time they are accessed. The value “never” should be used to describe archived URLs.

- Priority – The priority of a particular URL relative to other pages on your site. You may select between 0.0 and 1.0, where 0.0 identifies the lowest priority page(s) on your website and 1.0 identifies the highest priority page(s) on your website.
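To make the ‘Modified’, ‘Change frequency’ and ‘Priority’ cells more concrete, here is a rough Python sketch of how such values end up in a sitemaps.org style XML file. It is not the code the generator uses; the `build_sitemap` helper, the example.com address and the date are made up for illustration.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
CHANGE_FREQUENCIES = {
    "always", "hourly", "daily", "weekly", "monthly", "yearly", "never",
}


def build_sitemap(entries):
    """Build sitemap XML from (loc, lastmod, changefreq, priority) tuples."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod, changefreq, priority in entries:
        if changefreq not in CHANGE_FREQUENCIES:
            raise ValueError(f"unknown change frequency: {changefreq}")
        if not 0.0 <= priority <= 1.0:
            raise ValueError(f"priority out of range: {priority}")
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
        ET.SubElement(url, "changefreq").text = changefreq
        ET.SubElement(url, "priority").text = f"{priority:.1f}"
    return ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    # Hypothetical page, date and settings.
    print(build_sitemap([("https://www.example.com/", "2024-01-15", "weekly", 1.0)]))
```

The two checks mirror the rules above: only the seven listed change frequencies are accepted, and the priority has to stay between 0.0 and 1.0.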

‘URL Check’ Tab – This is an important tool for finding out why a page does not load or why Bing, Google, Yahoo and other SEs are not including it in their index. It’s a great way to Check Server Headers and allows you to modify the request properties!

- URL – Enter the full website address or url that you want more information about

- Request Properties – Enter values to send to the server such as the user agent or referrer. To enter a user agent and referrer when validating a page, simply enter:

User-Agent=X

Referer=X

Where X equals the value you want. Here is an example:

Referer: https://www.auditmypc.com

User-Agent: Mozilla/5.0 (compatible; ScoutJet; +http://www.scoutjet.com/)

If you ran the URL check on your site with these settings, your log files would show that the request was made by the blekko bot (scoutjet) and that the visitor was referred by the site auditmypc.com

Note: You can use this section of the tool to test security, website behavior and more…

- Save Content – You can also send the server headers and other information to a file or content view. When you click the ‘to content view’ option, then click start and then click the ‘Content’ Tab (next to ‘Request’), you’ll see the content / source of the web page. If you then click ‘Parse document info’, you’ll see a document tree and document info. The document tree is more advanced and can help webmasters discover missing head tags, body tags and more.

The Document Info will show you the title, number of links, meta tags and a listing of all the links found on that page.
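If you are curious what the Request Properties described above amount to outside of the tool, here is a minimal Python sketch using only the standard library. The `build_check_request` and `fetch_headers` helpers and the example.com address are my own placeholders, not part of the webmaster tool.

```python
import urllib.request


def build_check_request(url, user_agent, referer):
    """Prepare a HEAD request carrying custom User-Agent and Referer headers."""
    request = urllib.request.Request(url, method="HEAD")
    request.add_header("User-Agent", user_agent)
    request.add_header("Referer", referer)
    return request


def fetch_headers(request, timeout=10):
    """Send the request (needs network access) and return status and headers.

    urlopen raises urllib.error.HTTPError for 4xx and 5xx responses.
    """
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status, dict(response.headers)


if __name__ == "__main__":
    req = build_check_request(
        "https://www.example.com/",  # placeholder address
        "Mozilla/5.0 (compatible; ScoutJet; +http://www.scoutjet.com/)",
        "https://www.auditmypc.com",
    )
    # Show what would be sent; call fetch_headers(req) to actually send it.
    print(req.get_method(), req.full_url)
    for name, value in req.header_items():
        print(f"{name}: {value}")
```

Calling `fetch_headers(req)` sends the request and returns the status code and the server headers, which is roughly the kind of information the URL Check tab displays.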

‘System Information’ Tab – Shows you how much memory Sitemap Generator (Java) has available for use. If you are checking links or building a XML Sitemap for a large site, you’ll want to allocate more memory. Allocating more memory is as simple as issuing a command – see this 60 second video on how to increase Java memory for more information

To increase the memory available to Java, simply add the parameter -XmxNNNm, where NNN is ½ of your total conventional memory in megabytes. On Windows, this is done through Control Panel -> Java -> Java tab -> Java Applet Runtime Settings -> View.

For example, say that you are running the link checker on website containing 500,000 pages, simply type “-Xmx512m” in “Java Runtime Parameters” field (provided you have at least a total memory of 1GB – on average, you can go up to half of your computer memory).
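The half-of-your-memory rule of thumb is simple arithmetic, sketched here in Python. The `java_heap_flag` helper is hypothetical and only builds the text you would type into the “Java Runtime Parameters” field; it does not change any Java settings itself.

```python
def java_heap_flag(total_memory_mb):
    """Return a -Xmx flag set to half of the machine's total memory."""
    if total_memory_mb <= 0:
        raise ValueError("total memory must be positive")
    return f"-Xmx{total_memory_mb // 2}m"


if __name__ == "__main__":
    print(java_heap_flag(1024))  # a machine with 1GB of total memory
```

For a machine with 1GB (1024MB) of memory this yields the same -Xmx512m value used in the example above.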

Create the Sitemap – Export the Sitemap XML file.

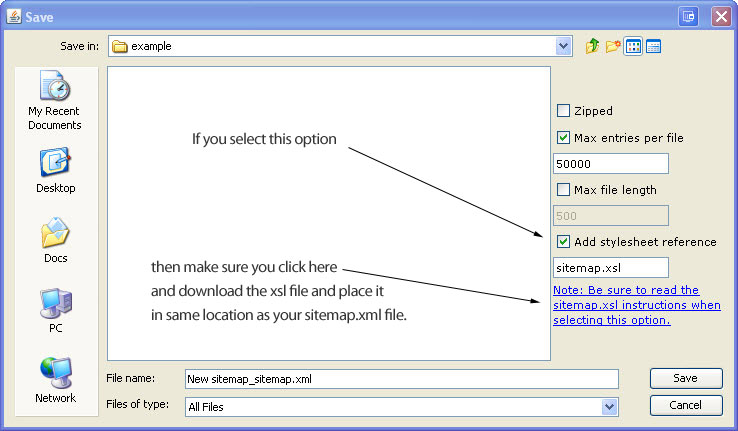

Once the sitemap generator has finished crawling your website, you need to export the sitemap file for search engines. Simply select the SITEMAP tab, then EXPORT, then SITEMAP XML. If you go with the defaults, it will save a sitemap called “New sitemap_sitemap.xml” into your default folder (usually your “My Documents” folder). Once you have the sitemap file, simply upload it (FTP, transfer) to your website’s main folder and let the search engines know the location.

Note: if you add a stylesheet reference (it doesn’t matter to the bots, but it looks great and is easy to read), then you’ll need the following sitemap.xsl file (it’s zipped up) placed in the same location as your sitemap.xml file.

Google Sitemap Generator – How to Submit Sitemap to Google

- When the sitemap generator has completed the crawling process, select Export under the Sitemaps Tab and choose Sitemap XML

- Enter the filename you would like to save the sitemap as and click save (the default is sitemaps.xml which is fine)

- Upload the new sitemap to your website. It seems every hosting company has a different method of doing this, but they are all basically the same. Think of your sitemap.xml file as any htm (html, php or asp) file that you’re going to place on your website. There is probably some type of import option that your hosting company provides you – use it to move (FTP, Publish, etc) the sitemap.xml file from your computer onto your website. Place it in the same directory that holds the main page for your website.

- Log into your Google Sitemaps account by visiting Google Sitemaps Account.

- Click on the “Add a Sitemap” link.

- Enter the URL for your Sitemap in the field, then click the [Submit URL] button.

- Example: The URL for your Sitemap will be your website address, followed by the filename that you uploaded. For example, if I uploaded my ‘sitemap.xml’ file to my auditmypc.com site, the URL I would give to Google Sitemaps would be https://www.auditmypc.com/sitemap.xml

This will submit your Sitemap to the Google service. It may take Google a few hours to generate reports about your site, so be patient while they work their mojo.

Bing Sitemap Generator – How to Submit Sitemap to Bing

- Create the sitemap as normal using our Sitemap Generator

- Click the ‘Save Sitemap’ tab located under the ‘Sitemap’ tab

- Select ‘Sitemap XML’ to save it out as the name you would like and then upload the sitemap to your website

- Submit your XML File to Bing Webmaster Home

Yahoo Sitemap Generator – How to Submit Sitemap to Yahoo

- Create the sitemap as normal using our Sitemap Generator

- Click the ‘Save Sitemap’ tab located under the ‘Sitemap’ tab

- Select ‘Sitemap XML’ to save it out as the name you would like and then upload the sitemap to your website

- Submit your XML File to Yahoo Site Explorer – UPDATE: No Longer in service

- You can provide Yahoo Sitemaps with a feed in many formats other than XML (stick with XML).

- RSS 0.9, RSS 1.0 or RSS 2.0, for example, CNN Top Stories

- Sitemaps, as documented on sitemaps.org

- Atom 0.3, Atom 1.0, for example, Yahoo! Search Blog

- A text file containing a list of URLs, each URL at the start of a new line. The filename of the URL list file must be urllist.txt; for a compressed file the name must be urllist.txt.gz.

XML Sitemap in Robots.txt File

Don’t want to have an account with each search engine to submit your sitemaps to? There is a solution! You can put the location of your sitemap inside a ROBOTS.TXT file. Every search engine will read your robots.txt file before crawling your site and if it sees this line:

Sitemap: [website address]/sitemap_location.xml

then it will find your sitemap without your having to do anything else.

Here is an example of a robots.txt file, which you can use if you don’t already have one:

User-agent: *

Disallow:

Sitemap: https://www.auditmypc.com/mynewsitemap.xml

Simply replace my location with your information.
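If you want to double check that a robots.txt file like the one above really exposes your sitemap, the following Python sketch parses it with the standard library’s `urllib.robotparser`. The `read_robots` helper is just an illustration, and `site_maps()` requires Python 3.8 or newer.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Disallow:
Sitemap: https://www.auditmypc.com/mynewsitemap.xml
"""


def read_robots(text):
    """Parse robots.txt content that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(text.splitlines())
    return parser


if __name__ == "__main__":
    robots = read_robots(ROBOTS_TXT)
    # Sitemap locations found in the file (None if there are none).
    print(robots.site_maps())
    # An empty Disallow line means nothing is blocked.
    print(robots.can_fetch("*", "https://www.auditmypc.com/any-page.html"))
```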

Benefits of this Online Sitemap Generator over other Sitemap Tools

One of the major advantages of using this tool is that owners of websites find errors they never knew existed on their sites! WordPress, Joomla, Drupal, phpBB and other content management systems all have sitemap programs you can add onto the system, but these sitemap generators read from the database, NOT from the outside; although faster, they miss errors that can only be seen from an outside crawl – these errors most often prevent sites from being indexed properly by Google, Bing, Yahoo and others! Once fixed, website owners usually notice a major increase in search engine traffic!

Let me give you a real life example – It starts off with trying to make a sitemap for Google and discovering that the sitemap generator simply stops at the main page and doesn’t find other pages within the site.

It is this very problem that people often write to me about. Almost always, after reviewing their site, I discover web pages that are missing beginning or ending tags, such as html, head and body tags.

I also discover that a large number of site owners are accidentally blocking robots from visiting their page. In the sitemap builder, you’ll notice that there is an option to honor robots.txt files and no follow tags.

If you’re having a problem with the sitemap builder and your page is formatted correctly, try deselecting the robots and no follow options. If this works, then the problem is with one of these items.

Every attempt has been made to make our webmaster tool behave as the search engine robots do when spidering a site. There are standards that each search engine subscribes to when reading websites and we subscribe to that same data. The point is, if we catch the errors, it’s a very real possibility that the search engines will also.

A perfect example would be a hosting company blocking our spider because it’s going too fast – chances are, the hosting provider is also doing this to the search engine robots and could be preventing them from seeing your entire site (which could lead to poor ratings). – See changing user agent above for solutions to this problem.

Common Sitemap Builder Problems

Problem: I click the image / link to run the sitemap generator but nothing happens. All I see is a page with a few links including a link to donate a cup of chai for offering such a cool tool, which I’d be happy to do if it worked :)

Chances are you’re not running Java or have an old version. Java is free and you can check your version at java.com/en/download/installed.jsp – once java is running, you’ll see the program and fall in love :)

Problem: You have created a sitemap but it picked up hidden files which you don’t want the search engines to see, so you deleted them from the sitemap.xml file, but the search engines still see the files.

Solution: If the sitemap builder can find these pages that you think are hidden, then so can the search engines. Sure, you can exclude them from the sitemap.xml, but the problem is that you are linking to these hidden files from one of your webpages. Click on the plus next to that URL under the Sitemap Tab of our generator and you’ll find all the urls linking to that hidden file.

If you want to exclude the files rather than hide them, you can exclude them in your robots.txt file. My sitemap builder will respect the robots.txt file (obey it), just like the search engines, and prevent them from being included in the site map. Note: Not all search engines respect your robots.txt file and may look at the url regardless.

Problem: You enter your website address and nothing happens.

Solution: Is that really your website’s main page? For example, you might have entered http://[yoursite.com] as the address, but if you type this in the browser and you end up at http://[yoursite.com]/index.shtm, then you have a landing page that is different from your website address.

In this case, you would enter http://[yoursite.com]/index.shtm as the site address.

Problem: I want to exclude images and css files.

Solution: Check the ‘Exclude Images’ and enter *.css as an exclude filter or enter the following in the exclude area:

*.jpg

*.bmp

*.gif

*.tiff

*.css

Problem: You want to capture only urls that are in the men sub-directory and contain only numbers, letters and forward slashes:

http://[yoursite.com]/shopping/men/casual/21/2

http://[yoursite.com]/shopping/men/sports/soccer

but not:

http://[yoursite.com]/shopping/men/sports-2 (has a dash)

Solution: Use a regex expression by prefixing it with ‘complex:’, for example:

complex:http://[yoursite.com]/shopping/men/[A-Za-z0-9/]*$

About regex:

- The entire url (including protocol and host) is matched against the pattern, for example:

complex:http://[yoursite.com]/shopping/men/[A-Za-z0-9/]*$

- Pages that are filtered out are not processed, i.e. let’s say we have a root page that references page A, which, in turn, references page B. If page A doesn’t match the filter rules, then you will never reach page B.

- ** Quick Reference **

[A-Za-z0-9] = Alphanumeric characters

[A-Za-z0-9_] = Alphanumeric characters plus “_”

[^A-Za-z0-9_] = Non-word characters

[A-Za-z] = Alphabetic characters

[ \t] = Space and tab

[\x00-\x1F\x7F] = Control characters

[0-9] = Digits

[^0-9] = Non-digits

[\x21-\x7E] = Visible characters

[a-z] = Lowercase letters

[\x20-\x7E] = Visible characters and spaces

[-!"#$%&'()*+,./:;<=>?@[\\\]^_`{|}~] = Punctuation characters

[ \t\r\n\v\f] = Whitespace characters

[^ \t\r\n\v\f] = Non-whitespace characters

[A-Z] = Uppercase letters

[A-Fa-f0-9] = Hexadecimal digits

- The plus sign (+) matches one or more of the previous item (previous character), so the expression Rob+in would return Robin, Robbin, and Robbbbin. Alternatively, you can build a list of previous items by using square brackets, like this: [abc]+ This will return a, ab, cab, c, b, bbbb, etc.

- The caret (^) matches the beginning of the document. Applying ^a to abc matches a, but ^b would not match because abc doesn’t start with b

- The dollar sign ($) matches the end of the document.

- A backslash (\) followed by any special character matches the literal character itself, that is, the backslash escapes the special character.

- The # and – characters must be escaped in expressions (## –) just as though they were special characters.

- A period (.) matches any character, including a new line.

- An asterisk (*) matches 0 or more of the preceding character (note that it will not be able to match an ending forward slash but period will).
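To try the men sub-directory expression outside of the tool, here is a small Python sketch. Keep in mind that the generator runs on Java and this uses Python’s `re` module purely for illustration; the two agree on the simple constructs used here, but that is an assumption on my part. The host example.com stands in for the [yoursite.com] placeholder and `passes_filter` is a hypothetical helper.

```python
import re

# example.com stands in for the [yoursite.com] placeholder.
MEN_FILTER = re.compile(r"http://example\.com/shopping/men/[A-Za-z0-9/]*$")


def passes_filter(url, pattern=MEN_FILTER):
    """Return True when the entire url matches the pattern."""
    return pattern.fullmatch(url) is not None


if __name__ == "__main__":
    for url in [
        "http://example.com/shopping/men/casual/21/2",
        "http://example.com/shopping/men/sports/soccer",
        "http://example.com/shopping/men/sports-2",  # has a dash
    ]:
        print(url, "->", "match" if passes_filter(url) else "no match")
```

The first two urls match, while the third is rejected because the dash is not in the [A-Za-z0-9/] character list.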

Problem: You have a WordPress site and want to exclude shortlinks (like testingiam.com/?p=31) from your xml sitemap.

Solution: Use a regex expression on one of the lines in the exclude url section.

complex:.\?.

The command above will exclude any url with a ? in it.

Problem: Site Map Generation slows down after 20,000 pages

Solution: Some webmasters have noticed that during a crawl of a very large site, the sitemap generator may slow down after spidering about 14,000 urls. This can happen if the site is heavily nested or has a complex linking structure.

People who experience this lag usually have rapid applet memory consumption and need to increase the amount. The site map builder by default is limited to 50-100m which can quickly be consumed on a complex web site.

To solve this problem, you can increase the amount of memory used by the site map builder. Simply navigate to the control panel and click on the Java Icon. Then, inside the Java Control Panel, click on the Java Tab, Java Applet Runtime Settings, View and then in the Java Runtime Parameters cell, enter ‘-Xmx256m’.

You can take it a step further when building a sitemap (if you’re still having problems) and enter ‘-Xmx512m’.

Problem: You enter your website address and the site map builder stops immediately.

Solution: This happens because your main page is redirected to another page (landing page). For example, you may have yoursite.com being redirected to yoursite.com/sales/products/sindex.htm

If this happens to you, simply enter your website address into your browser and notice where you are redirected to; take that redirected website address and enter it into the sitemap generator.

In the example I used above, you would enter:

yoursite.com/sales/products/sindex.htm

into the sitemap generator.

Problem: The sitemap generator finds JPG files even though you’ve ticked the “exclude images” option.

Solution: Add the extension to the exclude filter as well, such as *.JPG
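The reason *.JPG needs its own filter is that the wildcard filters are case sensitive. The Python sketch below imitates that behaviour with `fnmatchcase`; it is not the generator’s own matching code, the `is_excluded` helper and the example.com urls are made up, and unlike the tool’s filters Python also treats `?` and `[...]` as wildcards.

```python
from fnmatch import fnmatchcase

# A few exclude filters in the style used on this page.
EXCLUDE_FILTERS = ["*/feed/*", "*/feed", "*/tag/*", "*wp-admin*", "*.css", "*.jpg"]


def is_excluded(url, filters=EXCLUDE_FILTERS):
    """Return True if the url matches at least one exclude filter."""
    return any(fnmatchcase(url, pattern) for pattern in filters)


if __name__ == "__main__":
    for url in [
        "https://example.com/blog/feed/",
        "https://example.com/about/",
        "https://example.com/photo.jpg",
        "https://example.com/photo.JPG",
    ]:
        print(url, "->", "excluded" if is_excluded(url) else "kept")
```

Here photo.jpg is excluded but photo.JPG is kept until a *.JPG filter is added to the list.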

Problem: The sitemap generator misses a few or many files.

Solution: If you are having problems building a sitemap, it may be due to your Robots.txt file or your Metatag. Try unchecking the Follow robots.txt rules and/ or meta name robots rules.

Problem: I can’t see the webmaster tool graphic button, so I can’t start the test.

Solution: If that’s the case, then your browser settings may be preventing sitemap generation.

In IE, look under Tools, Internet Options, Security, Custom Level, Scripting of Java applets and choose prompt. Active scripting should be enabled as well.

In Firefox, look under tools, options, web features and make sure the Enable Java and JavaScript is selected.

If after trying these you still have a problem, please let me know and I will do my best to get you up and running.

Problem: Google Sitemap Invalid Date Error Message

Solution: If you get an ‘invalid date’ error when you submit your sitemap, check to make sure that the time is not in the future. A common mistake is not accounting for daylight saving time when creating the sitemap, so make sure you use the time zone for your server and not the local timezone.

Note: This sitemap generator runs on your PC and not the server.
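One way to sidestep time zone and daylight saving mix-ups is to write the modified time with an explicit UTC offset and compare it against the current UTC time. The Python sketch below shows the idea; the `utc_lastmod` and `is_in_future` helpers are hypothetical and the dates are made up.

```python
from datetime import datetime, timezone


def utc_lastmod(moment):
    """Format a timezone-aware datetime as a UTC timestamp with an offset."""
    return moment.astimezone(timezone.utc).isoformat(timespec="seconds")


def is_in_future(lastmod, now=None):
    """Return True if a timestamp that carries a UTC offset lies after `now`."""
    now = now or datetime.now(timezone.utc)
    return datetime.fromisoformat(lastmod) > now


if __name__ == "__main__":
    # Made-up reference time for the demonstration.
    reference = datetime(2024, 3, 10, 12, 0, tzinfo=timezone.utc)
    print(utc_lastmod(reference))
    # 14:00 at +01:00 is 13:00 UTC, one hour after the reference time.
    print(is_in_future("2024-03-10T14:00:00+01:00", reference))
```

The timestamp passed to `is_in_future` must include an offset such as +01:00; a bare local time cannot be compared reliably, which is exactly the kind of mistake described above.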

Problem: Only one url (page / website address) shows in the sitemap generator and I know I have hundreds of pages?

Solution: Open your browser and visit your website’s main page. When you see the main page in the browser, copy the website address and paste that address into the sitemap generator URL field under the settings tab. Don’t type it in, copy and paste the entire address just as it appears and you’ll be all set.

When my tool builds a sitemap, it needs a valid starting url. Chances are, you have given it a url that is a redirect. For example, if I gave it http://AuditMyPC.com, it would stop, it needs https://www.auditmypc.com (auditmypc.com redirects to www.auditmypc.com).

How to Run my Sitemap Generator/Webmaster Tool

Firefox, as of version 52, has disabled support for Java apps in the standard version of the browser. However, the version most government agencies, universities and other large organizations use is Firefox ESR (Extended Support Release). There is a 32 bit and a 64 bit version; you’ll want to use the 32 bit version. It works great and will allow you to run my sitemap generator – trust me, it’s a small price to pay to discover the problems my sitemap generator finds with your website.

Here are the steps…

1) Search Google for Firefox ESR

2) Click the download button

3) Select the version for your language, but do not choose the 64 bit version.

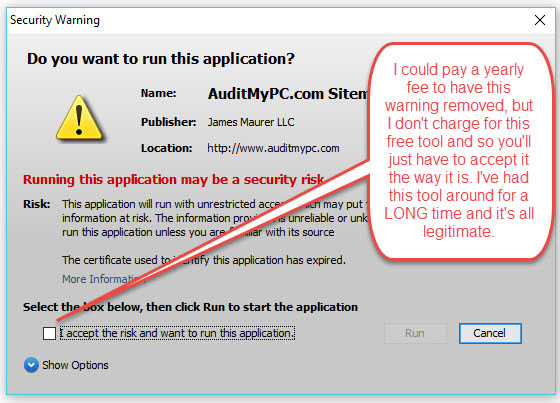

4) Revisit my website’s sitemap generator page and click the accept button when you get a security warning.

I could have paid for a certificate so this message would not appear, as I have in the past, but I do not charge visitors for my sitemap generator/webmaster tool, so I’m no longer paying the fee. You either trust me or you don’t; it is entirely up to you. I have had this website for almost two decades now and you can read more on my about page.

Like my Sitemap Generator?

Like our sitemap builder? Please let others know by displaying this icon. Simply copy and paste the code snippet below onto your webpage:

<a href="https://www.auditmypc.com/free-sitemap-generator.asp" target="_blank"><img alt="Sitemap Generator" src="https://www.auditmypc.com/images/sitemap-generator-80x15.gif" width="80" height="15" border="0" /></a>

Or, perhaps this XML Generator icon

XML Sitemaps – 1kb at 80 x 15 in .gif format.

<a href="https://www.auditmypc.com/free-sitemap-generator.asp" target="_blank"><img alt="XML Sitemap Generator" src="https://www.auditmypc.com/myicons/xml-sitemap-generator.gif" width="80" height="15" border="0" /></a>